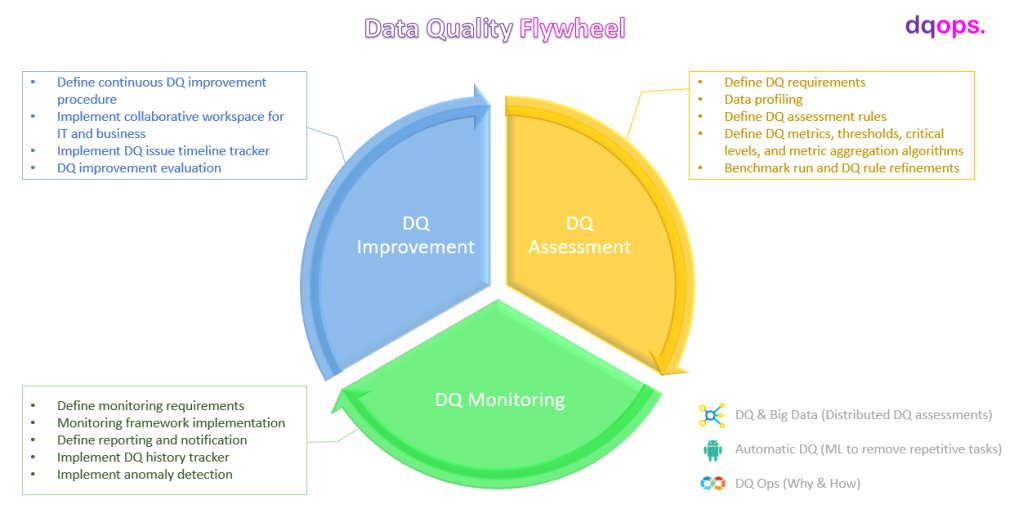

In this blog series I plan to write about data quality improvement from a data engineer’s perspective. The series will cover not only data quality concepts, methodologies and procedures, but also case studies of the architectural designs of some data quality management platforms and deep dives into the technical details of implementing a data quality management solution. In addition, I am keen to cover some areas I have been exploring recently, such as data quality assessment of big data, automatic DQ, and DQ Ops.

This blog post sets the scene for my data quality improvement journey, focusing on the motivation behind data quality improvement, the nature of data quality, and the requirements for data quality improvement.

Data Quality, Why?

Firstly, organisations are overoptimistic about their data quality and underestimate the losses caused by data quality issues. The reality is:

The average financial impact of poor data quality on organizations is $9.7 million per year.

Gartner

In the US alone, businesses lose $3.1 trillion annually due to poor data quality

IBM

Organizations make (often erroneous) assumptions about the state of their data and continue to experience inefficiencies, excessive costs, compliance risks and customer satisfaction issues as a result

Gartner

Secondly, the trend across industries toward business process automation and data-driven decision making makes reliable data quality critical for an organisation [4]. Techniques such as ML and AI are often highly sensitive to input data [5]: subtle errors in the input data can cause significant inaccuracy no matter how good the algorithm is.

Thirdly, you may have to pay an alarmingly large penalty because of poor data quality:

China’s banking regulator has fined the country’s four major state-owned lenders for inconsistencies in their financial data reporting.

GBRR

Citi fined $400M over risk management, data governance issues

Banking Dive

Natures of Data Quality

Before jumping to the processes for improving data quality, we need to first understand what data quality is and why it is difficult to manage.

No, Your Data is Not Bad, It is Just Not Useful

There is no such thing as “Good” data or “Bad” data. Data can only be evaluated as “fit to use” or “not fit to use” for a specific purpose. Therefore, data quality has to be assessed in the context of how the data is expected to be used. The same set of data can be “Good” (i.e., fit to use) for one purpose, but “Bad” for another purpose.

For example, take a set of order transaction data. When it is used for demand prediction, it may still be fit to use for producing a relatively accurate prediction even though a small number of transaction records are missing. However, the same set of data will not be fit to use for monthly financial reports, where every transaction has to be accounted for.

As we evaluate the quality of data by its fitness to use for a specific purpose, a couple of questions arise. Firstly, how shall we quantify the level of fitness, and in what dimensions? Secondly, can’t we just make our dataset universally fit for all purposes? Is that economically feasible or reasonable?
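The order transaction example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Purpose` class, the completeness thresholds (0.95 and 1.0) and the record counts are all hypothetical values chosen to show how one dataset can pass for one purpose and fail for another.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Purpose:
    """A use of the data together with the completeness it needs (hypothetical)."""
    name: str
    min_completeness: float


def completeness(received: int, expected: int) -> float:
    """Share of expected records that actually arrived."""
    if expected <= 0:
        raise ValueError("expected must be positive")
    return received / expected


def fit_for_use(score: float, purpose: Purpose) -> bool:
    """The same score is judged against each purpose's own threshold."""
    return score >= purpose.min_completeness


# Assumed thresholds: forecasting tolerates a few gaps, financial reporting does not.
demand_forecast = Purpose("demand prediction", 0.95)
financial_report = Purpose("monthly financial report", 1.0)

# Assumed counts: 20 of 10,000 order transactions are missing.
score = completeness(received=9_980, expected=10_000)
results = {p.name: fit_for_use(score, p) for p in (demand_forecast, financial_report)}
print(results)
```

The dataset is scored once, but the verdict depends on who is asking: the score of 0.998 clears the forecasting threshold and misses the reporting one.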

Those questions and the others raised in the rest of this section will form the core requirements for a data quality improvement process.

Data is not Static, Neither is Data Quality

The current enterprise data environment has become more and more complex. Data is not static but continuously moves and evolves, driven by database schema changes following source app redesigns and upgrades, ill-maintained ETL pipelines, endless data migration and consolidation efforts, and ungoverned self-service data usage. A subtle error occurring in one place can trigger a butterfly effect and spread widely. Even worse, the subtle errors introduced by changes in data can be very difficult to detect [2]. Based on my personal experience, I could not agree more, especially when the transformation/enrichment logic is complex or you have very limited access to the source databases.

Because data is dynamic, data quality is not static over time either. As mentioned above, a single subtle error can trigger a butterfly effect and cause data quality to deteriorate unnoticed. In addition, data represents the real world. As the real world changes quickly, the meaning held by the data changes, and so do the criteria used to judge data quality.

Therefore, you should not be surprised to find that you have serious data quality issues now even though your data achieved a high quality score last week.

Data Quality Improvement Involves Too Many Parties, Even just in IT

It is well accepted in the data quality management community that data quality is not just a technical problem but also a business problem. IT and business have to work together and align on the data quality improvement goals and processes. The collaboration between IT and business is one of the main topics elaborated in the data quality management literature, such as [1][3]. However, I have found few articles that analyse the engineering challenges of data quality improvement within IT.

Data quality improvement is one of the most challenging jobs in IT. The root cause is that a running data system spans too many territories owned by different teams, which have different mindsets, different work priorities and different “best practices” for doing things. Just have a look at a data lineage diagram. There are the source databases, where the team that designs them is not the team that operates them. In a large enterprise data environment, a messaging service is often deployed to decouple the operational databases from downstream systems, which adds one team responsible for the messaging service and probably another team maintaining a set of APIs for integration. Then there is the ETL team (often with dependencies on other teams such as MDM, infrastructure and third-party vendors), which enriches and transforms the data into a state usable by end data users. And then there are the countless client analysis tools and languages. Even before solving a data issue, just travelling through the lineage to identify the issue is a pain.

For each territory along the data lineage paths, the data and the processing logic are safely protected within its borders. The people responsible for solving the data quality issue often have no access to investigate the data and code. In addition, the processing logic can be very complex and the techniques used can differ greatly between territories. Therefore, you have to rely on the experts in that territory to look for the data quality issue. As that territory has its own work schedule and priorities, you will find yourself playing the “waiting” game. Even worse, the team investigating your issue may have to wait for other teams to get some prerequisites ready before they can look into it. And this is just one territory on your journey to find the DQ issue. If the issue is finally confirmed not to come from this territory, you have to move up along the data lineage paths and do it all over again. Sometimes you even have to revisit territories you have already passed.

Data Quality Requirements

From the analysis of the nature of data quality, we can see some high-level requirements for data quality improvement starting to emerge. As data quality can only be evaluated as “fit (or not fit) to use” for a specific purpose, data quality assessments have to be conducted in the context of how the data is expected to be used. In this case, a series of questions needs to be considered when conducting a data quality assessment:

- How to measure the ‘fitness to use’ of a set of data for a specific purpose: in what dimensions, how to quantify the level of fitness, and how to assess and monitor the effectiveness of the data quality assessment design?

- The personas involved in a data quality assessment process. Who should define the data quality assessment rules, with support from whom, and who should assess the effectiveness of the data quality assessment rules?

- Tooling support for data quality assessment. Data quality assessment can be a daunting task that involves data profiling, data quality rule design and execution, and assessment results logging and analysis. What tooling features can make the process easier?
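To make the first and third questions more concrete, here is a minimal sketch of what a quantified, rule-based assessment could look like. It is not taken from any particular platform: the `Rule` class, the dimension names, the two example rules and the sample rows are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable

Row = dict[str, object]


@dataclass(frozen=True)
class Rule:
    """A row-level data quality rule tagged with the dimension it measures."""
    name: str
    dimension: str
    check: Callable[[Row], bool]


def assess(rows: list[Row], rules: list[Rule]) -> dict[str, float]:
    """Return the pass rate (0.0 to 1.0) of each rule over the given rows."""
    if not rows:
        raise ValueError("cannot assess an empty dataset")
    return {
        rule.name: sum(1 for row in rows if rule.check(row)) / len(rows)
        for rule in rules
    }


# Hypothetical rules for an order transaction dataset.
rules = [
    Rule("order_id_present", "completeness",
         lambda r: r.get("order_id") is not None),
    Rule("amount_non_negative", "validity",
         lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0),
]

# Hypothetical sample rows.
rows = [
    {"order_id": 1, "amount": 20.0},
    {"order_id": 2, "amount": -5.0},
    {"order_id": None, "amount": None},
    {"order_id": 4, "amount": 7.0},
]

scores = assess(rows, rules)
print(scores)
```

Keeping each rule as a named object with a dimension attached is one possible design choice: it gives the results a stable key for logging, and it leaves room for different people to own different rules.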

As mentioned earlier, data is not static but continuously moves and evolves in an enterprise data environment. A one-shot data quality assessment is meaningless when the assessed data is constantly changing. Because data is dynamic, data quality assessment needs to be ongoing and continuous so that it reflects the up-to-date status of data quality in a timely manner.
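As a rough sketch of the difference between a one-shot assessment and an ongoing one, the snippet below keeps a history of scores and raises alerts both when a score falls below a floor and when it drops sharply between two runs. The `ScoreMonitor` class, the threshold values and the weekly scores are hypothetical and only serve the illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ScoreMonitor:
    """Tracks a data quality score over repeated assessment runs (hypothetical)."""
    threshold: float  # minimum acceptable score
    max_drop: float   # largest acceptable fall between two consecutive runs
    history: list[float] = field(default_factory=list)

    def record(self, score: float) -> list[str]:
        """Store the latest score and return any alerts it triggers."""
        alerts = []
        if score < self.threshold:
            alerts.append("below_threshold")
        if self.history and self.history[-1] - score > self.max_drop:
            alerts.append("sudden_drop")
        self.history.append(score)
        return alerts


monitor = ScoreMonitor(threshold=0.95, max_drop=0.02)

# Assumed scores from four consecutive weekly assessment runs.
weekly_scores = [0.99, 0.985, 0.96, 0.93]
alerts_per_run = [monitor.record(s) for s in weekly_scores]
print(alerts_per_run)
```

With these assumed numbers, the third run is still above the floor but already triggers a `sudden_drop` alert, a week before the score actually falls below the threshold. A single assessment in week one would have reported nothing.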

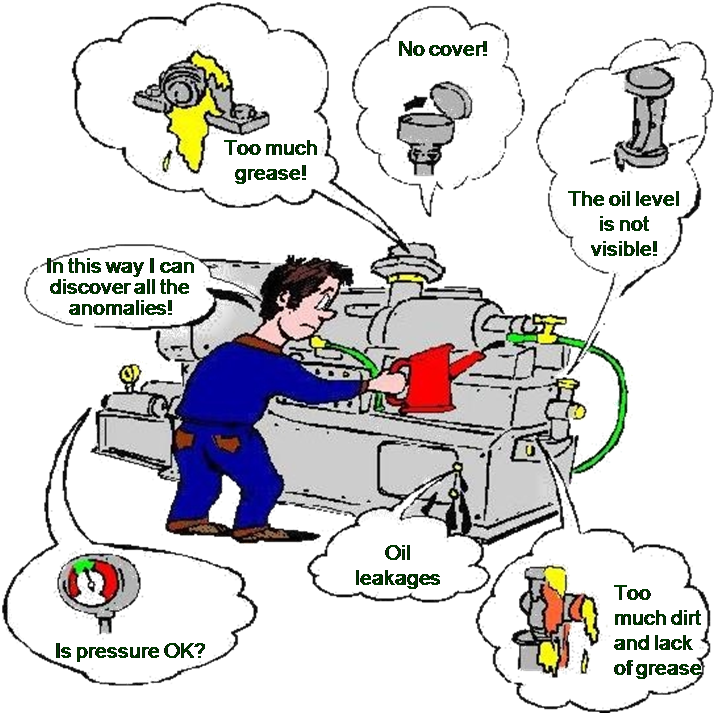

For data quality improvement, awareness is key. The ongoing monitoring of data quality is the driver of data quality improvement. For quality control of other products, such as an oil rig or other machines, quality issues are relatively visible or easy to notice. In comparison, quality issues in data can stay much quieter and are not easy to notice. You cannot simply blame an organisation for ignoring data quality issues if it is not aware of them in the first place.

Compared to data quality assessment, which focuses more on business analysis and process management, the requirements of ongoing data quality monitoring focus more on engineering, for example:

- What components are required to implement a complete, configurable and maintainable data quality monitoring platform?

- Where should the data quality rules be executed: where the data is located, or on the data quality monitoring platform after the data has been cached there?

- The data stores in a data system vary: they can be Oracle, Spark, a data lake and many more. How to design an extensible, data-store-independent data quality monitoring platform while keeping good performance and scalability?

- What are the security and compliance considerations of a data quality monitoring platform?
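One common way to approach the data-store independence question is to write checks against a small interface and provide one adapter per store. The sketch below assumes a hypothetical `DataStore` protocol with a single `fetch_column` method, and uses an in-memory adapter as a stand-in for a real Oracle or Spark connector.

```python
from typing import Iterable, Protocol


class DataStore(Protocol):
    """Minimal interface a store adapter would implement (hypothetical)."""

    def fetch_column(self, table: str, column: str) -> Iterable[object]:
        ...


class InMemoryStore:
    """Stand-in adapter; a real one would wrap a database or cluster client."""

    def __init__(self, tables: dict[str, list[dict[str, object]]]) -> None:
        self._tables = tables

    def fetch_column(self, table: str, column: str) -> Iterable[object]:
        return [row.get(column) for row in self._tables[table]]


def null_rate(store: DataStore, table: str, column: str) -> float:
    """Share of null values in a column, computed on the platform side."""
    values = list(store.fetch_column(table, column))
    if not values:
        raise ValueError("column has no values to assess")
    return sum(v is None for v in values) / len(values)


# Hypothetical sample data.
store = InMemoryStore({
    "orders": [
        {"order_id": 1, "customer_id": "C1"},
        {"order_id": 2, "customer_id": None},
        {"order_id": 3, "customer_id": "C3"},
        {"order_id": 4, "customer_id": "C4"},
    ]
})
rate = null_rate(store, "orders", "customer_id")
print(rate)
```

Note that this sketch pulls the column values to the monitoring platform before checking them, which is the second option in the question about where rules execute. Pushing the check down to the store (for example, as a query that returns only the aggregate) would need a richer interface but moves far less data.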

Last but not least, once we are aware of the data quality issues, the next step is to locate and solve them. As discussed above, solving a data quality issue can be challenging in an enterprise data environment. Fortunately, many others are feeling similar pains, and some of them, such as the DataOps community, have started to practise new ways of working to tackle those pains. The data quality improvement process should be designed to align with those new ways of working and to be an integrated part of them.

References

[1] McGilvray, D. (2008) Executing Data Quality Projects: Ten Steps to Quality Data and Trusted Information, California: Morgan Kaufmann.

[2] Polyzotis, N., Roy S., Whang S. E., and Zinkevich M., Data management challenges in production machine learning. SIGMOD, 1723–1726, 2017

[3] Redman, T. 2008. Data Driven: Profiting from Your Most Important Business Asset. Cambridge, MA, USA: Harvard Business Press.

[4] Schelter, S., Lange, D., Schmidt, P., Celikel, M., Biessmann, F., and Grafberger, A., Automating large-scale data quality verification. Proceedings of the VLDB Endowment, 11(12):1781–1794, 2018.

[5] Sculley D., Holt G., Golovin D., Davydov E., Phillips T., Ebner D., Chaudhary V., Young M., Crespo J., and Dennison S. E., Hidden Technical Debt in Machine Learning Systems. NIPS, 2503–2511, 2015
